The Demo That Fooled Everyone

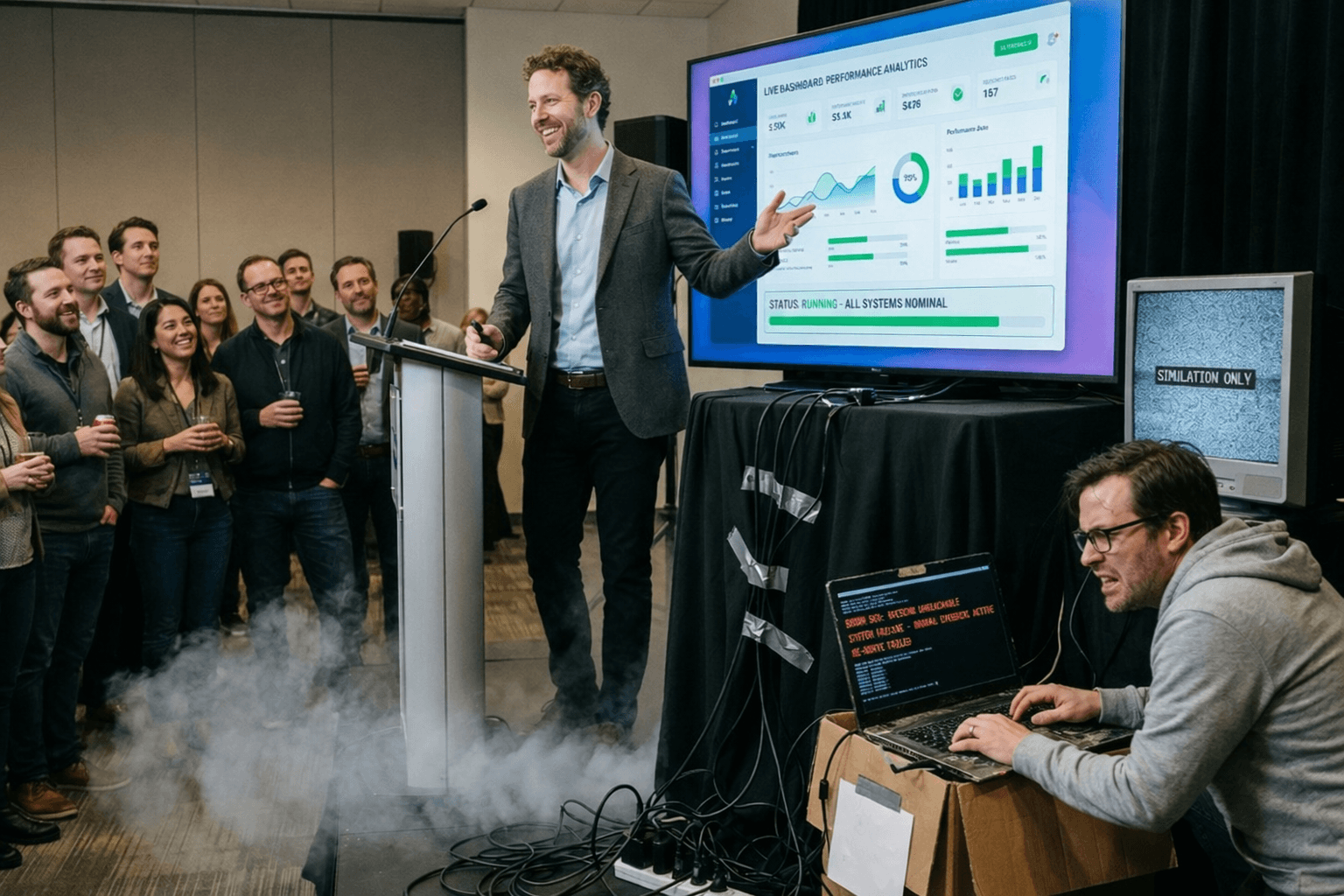

Picture a scene that has played out in conference rooms across enterprise and public sector organizations over the past eighteen months. A development team, armed with one of the new generation of agentic AI coding tools, spends an afternoon building what looks like a fully functional CRM workflow replacement. By end of day, they have a working interface, a data model, and a process automation layer. The demo is clean and visually credible. Stakeholders lean forward. Someone asks, half-seriously, whether the organization still needs Salesforce at all.

That question is the starting point for a conversation that matters, but the framing is wrong. Agentic AI coding tools are a genuine shift: unlike earlier code-completion assistants, these systems autonomously plan, execute, and interact with compilers, debuggers, and version control to iteratively perform complex development tasks. What the afternoon's demo does not show is whether that code can carry the operational weight of a production system that thousands of users depend on, that must remain available during a network incident, and that someone has to support two years from now. The more consequential question is not whether these tools can replace established platforms like Salesforce, Microsoft Dynamics 365 and Power Platform, or ServiceNow. It is how the two categories will converge.

What These Tools Actually Do Well

The value of agentic coding tools is not that they write better code than experienced engineers. It is that they collapse the feedback loop between concept and prototype to a timescale that changes how organizations think about requirements.

What historically consumed weeks of back-and-forth between business stakeholders and developers can now happen in hours. Developer surveys confirm that AI coding tools have become a standard part of the practitioner toolkit, with substantial reported productivity gains on code generation and prototyping tasks. Agentic systems extend this further: they can decompose high-level goals, coordinate multi-step processes, and adapt their approach based on intermediate feedback.

What matters analytically is being precise about where that capability begins and ends. UI generation, workflow encoding, and rapid prototyping are genuine strengths. Scalability validation, robustness under sustained load, long-term reliability, and the accumulation of known failure modes are not, at least not yet, and not by default.

The Gap No Demo Can Close

Production readiness is not simply a higher bar of functional correctness. It is a qualitatively different property. Production-ready software includes monitoring, deployment automation, security controls, and the operational processes necessary to run reliably in real-world environments. It has been stress-tested beyond expected load to identify breaking points, and it has surfaced its latent defects: flaws that do not manifest immediately but emerge over time under specific conditions. The Carnegie Mellon Software Engineering Institute's CMMI framework defines software maturity in terms of organizational process capability, measuring the degree to which development processes are established, repeatable, and improving across five maturity levels. NIST's software reliability engineering guidance addresses the corresponding principle at the software component level: reliability requires accumulating operational data and understanding failure mechanisms over time.

No amount of test coverage substitutes for the operational track record that production systems accumulate over years of real-world use.

The platforms organizations have built their operations on, including Salesforce, Dynamics 365, Power Platform, and ServiceNow, carry exactly this kind of accumulated trust. An organization replacing any of those platforms with software generated in an afternoon is not choosing a better tool. It is choosing to start the trust-accumulation process from scratch: under operational conditions, with real users and real data, and without the institutional knowledge of how the system behaves when things go wrong.

When the Code Looks Right but the System Is Wrong

The security dimension deserves specific attention, because it is more concrete and more immediate than most organizations realize. It is not the only concern, however. The limitations of AI-generated code extend to broader code quality: research on AI code-completion tools has found correlations between AI-assisted development and increased rates of lower-quality code being committed to repositories, as well as patterns that may discourage code reuse and increase long-term maintenance burden. These findings come from studies of earlier-generation tools and warrant only moderate confidence, but they identify a risk that organizations should factor into their governance frameworks alongside the evidence on security vulnerabilities.

On the security side specifically, the Cloud Security Alliance, a non-profit security organization, cites research finding that 62% of AI-generated code solutions contain design flaws or known security vulnerabilities, even when using the latest foundational models. A peer-reviewed systematic literature review found that AI code generation outputs are non-deterministic and that the percentage of secure code varies significantly across vulnerability class and programming language. A Stanford study, reported by TechCrunch, examined Codex, an earlier generation of AI code-completion tools, and found that engineers using such systems were more likely to introduce security vulnerabilities into the applications they developed. Subsequent research on more recent AI code generation models has consistently found the same directional pattern. Research by the Center for Security and Emerging Technology at Georgetown University adds a counterintuitive dimension: larger and more capable models do not necessarily produce more secure code, and the relationship between model scale and output security is not linear.

None of this is a reason to avoid these tools. It is a reason to be specific about the skills and discipline required to use them responsibly at enterprise scale. Getting AI to produce code that is robust, scalable, and non-fragile is not automatic. It requires engineers who can specify security and reliability requirements with enough precision to constrain the model's output before generation, rather than auditing for problems afterward. Research from the University of Massachusetts Dartmouth found that prompting AI with explicit security requirements before generating code helped significantly to reduce vulnerabilities. In our experience, the developer who can reliably extract production-quality output from an agentic tool is not a less-skilled developer. They are a more disciplined one, with a clear mental model of what the tool will and will not get right without explicit guidance. That observation is consistent with the research on explicit security requirements. Organizations that treat agentic tools as a shortcut around engineering judgment will find the gap between demo and production wider than they expected.
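To make "specify requirements before generation" concrete, here is a minimal sketch of one way a team might assemble a prompt so that explicit security requirements precede the task. Everything in it is an assumption made for illustration: the `SecurityRequirement` structure, the `build_generation_prompt` helper, the requirement identifiers, and the prompt wording are hypothetical, and no particular tool or model API is implied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SecurityRequirement:
    """One explicit constraint the generated code must satisfy (hypothetical structure)."""
    identifier: str
    statement: str


def build_generation_prompt(task: str, requirements: list[SecurityRequirement]) -> str:
    """Place the security requirements ahead of the task description."""
    # Refuse to proceed without requirements: the point is to constrain
    # the output up front, not to rely on a later audit.
    if not requirements:
        raise ValueError("no explicit security requirements were supplied")

    lines = ["Security requirements (all mandatory):"]
    for req in requirements:
        lines.append(f"- [{req.identifier}] {req.statement}")
    lines.append("")
    lines.append(f"Task: {task}")
    return "\n".join(lines)


# Illustrative requirements; a real list would come from the team's own standards.
requirements = [
    SecurityRequirement("SEC-1", "Use parameterized queries for all database access."),
    SecurityRequirement("SEC-2", "Validate and length-limit every user-supplied field."),
]
prompt = build_generation_prompt(
    "Write a handler that saves a new CRM contact.", requirements
)
print(prompt)
```

The design choice worth noting is the hard failure on an empty list: it turns "state the requirements first" from a habit into a gate. Whether a given model honors the requirements still has to be verified by review and testing.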

The Platforms Were Never Standing Still

The premise that agentic AI tools are racing to displace established enterprise platforms misreads the competitive dynamics. These platforms have not been waiting to be disrupted. They have been absorbing the disruption into their architecture. Salesforce describes its Agentforce offering as bringing agentic AI to enterprise CRM, built on its core platform infrastructure. Microsoft describes Copilot integration across Power Platform and Dynamics 365 as enabling AI-assisted development natively within the platform stack. ServiceNow describes Now Assist as embedding agentic AI into enterprise service management workflows. All three are vendor-promoted descriptions, but the pattern is corroborated by independent market analysis: Salesforce and ServiceNow are competing directly on agentic AI capabilities, with both integrating these features natively.

The platforms most likely to be displaced by agentic AI are the ones that chose not to integrate it.

Two Models for Getting This Right

We see two convergence models taking shape in practice, and both are worth understanding in some detail because they have different implications for how organizations should sequence their investments.

The first uses AI-assisted rapid prototyping to drive iterative refinement toward a vetted specification, which is then implemented on proven platform foundations, specifically Salesforce for CRM, Power Platform for process automation, and ServiceNow for IT service management. The AI collapses the front end of the development lifecycle. The platform provides the production-grade infrastructure, compliance posture, and operational track record that the specification demands. What would previously have required months of requirements gathering and iterative specification work can be compressed into days of prototype-driven refinement, with the resulting specification handed off to a platform implementation that carries the operational weight.

The second model is architecturally distinct. Agentic AI tools handle the experience and workflow layer, covering the interfaces, user journeys, and process automation that users interact with, while the backend is built through more traditional development practices augmented by AI, or assembled from robust components that established platforms already provide. Both models share a common logic: AI-generated components carry weight where they can be validated quickly and where the cost of failure is recoverable, while proven platform components carry the load where failure is not recoverable. That second category includes data integrity, security, regulatory compliance, and sustained availability under production load. Far from rendering established platforms obsolete, both models position them as the infrastructure layer that makes AI-generated concepts production-ready.
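The shared logic of the two models can be written down as a simple allocation rule. The sketch below is illustrative only: the `Component` fields, the `Layer` names, and the example components are hypothetical, and real architectural decisions involve more than two yes-or-no questions.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    AI_GENERATED = "ai-generated"
    PROVEN_PLATFORM = "proven platform"


@dataclass(frozen=True)
class Component:
    """A piece of the system, described by the two properties the argument turns on."""
    name: str
    quick_to_validate: bool      # can correctness be checked quickly?
    failure_recoverable: bool    # is the cost of a failure recoverable?


def assign_layer(component: Component) -> Layer:
    """AI-generated code carries weight only where both conditions hold."""
    if component.quick_to_validate and component.failure_recoverable:
        return Layer.AI_GENERATED
    # Data integrity, security, compliance, and sustained availability land here.
    return Layer.PROVEN_PLATFORM


components = [
    Component("intake form UI", quick_to_validate=True, failure_recoverable=True),
    Component("customer record store", quick_to_validate=False, failure_recoverable=False),
]
for component in components:
    print(f"{component.name}: {assign_layer(component).value}")
```

The rule defaults to the proven platform whenever either condition is in doubt, which mirrors the article's position that unrecoverable failure modes belong on infrastructure with an operational track record.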

From Prototype to Production

The organizations that will extract the most value from agentic AI coding tools are not the ones that use them to replace their platforms. They are the ones that use them to accelerate the work of building on those platforms, and that invest in what that actually requires.

That investment is more demanding than it first appears. Agentic tools do not slot into existing development workflows without friction. Using them effectively requires engineers to develop new instincts: how to specify requirements with enough precision to constrain model output, how to validate what the tools generate rather than simply accept it, and how to recognize the categories of problem (security, scalability, long-term maintainability) where human judgment cannot be delegated. This is not a matter of learning a new interface. It is a matter of learning a new way to develop software, one that demands more deliberate engineering discipline, not less. Organizations that treat adoption as a tooling decision rather than a capability-building investment tend to discover the gap between demo and production the hard way. That learning curve applies equally to the teams building internal systems and to the firms, like Spruce, developing software solutions for clients.

Alongside that investment in engineering practice, organizations need governance frameworks and architectural clarity to close the distance between what these tools generate and what production systems require. The NIST AI Risk Management Framework provides useful scaffolding for that governance work, and the trajectory of the major platforms themselves points toward convergence rather than displacement.

The question is not whether to use these tools. It is whether your organization has built the engineering discipline, the learning infrastructure, and the platform foundation to turn what they generate into something you can stake your operations on.

The end of SaaS is not coming. What is coming is a more capable and more iterative way of building on top of it, and the organizations that understand that distinction and invest accordingly will move faster and with less risk than those still debating the wrong question.

Sources

- Stack Overflow Developer Survey 2024. Stack Overflow.

- CMMI: Capability Maturity Model Integration. Carnegie Mellon University Software Engineering Institute.

- Software Reliability Engineering. National Institute of Standards and Technology.

- Understanding Security Risks in AI-Generated Code. Cloud Security Alliance.

- A Systematic Literature Review on the Impact of AI Models on Software Engineering. PMC.

- Code-Generating AI Can Introduce Security Vulnerabilities, Study Finds. TechCrunch.

- Security Analysis of Code Generated by AI. University of Massachusetts Dartmouth Graduate Thesis.

- Cybersecurity Risks of AI-Generated Code. Center for Security and Emerging Technology, Georgetown University. 2024.

- Salesforce Agentforce: Agentic AI for Enterprise CRM. Salesforce.

- Microsoft Copilot for Power Platform: AI-Assisted Low-Code Development. Microsoft.

- ServiceNow Now Assist: Agentic AI for Enterprise Workflows. ServiceNow.

- Salesforce, ServiceNow Heading For Agentic AI Battle. Investor's Business Daily.

- NIST AI Risk Management Framework. National Institute of Standards and Technology.