A missed appointment and a missed life change

On a weekday evening, a single mother balances a cracked smartphone on her knee and tries to apply for housing assistance. The site is technically "accessible." A screen reader could announce the form fields. The color contrast passes. Yet the language toggle stalls halfway through, leaving key instructions in English. The form assumes always-on broadband; images and scripts crawl on her prepaid data plan. A pop-up about data sharing feels vague and risky. After three attempts, she closes the tab and resolves to call tomorrow — which means taking time off work and risking another missed paycheck.

Many Americans are now effectively smartphone-first, and gaps in home broadband access persist, especially among lower-income households. That makes latency, page weight, and mobile ergonomics more than cosmetic concerns, as detailed in the Pew Research Center's report on mobile technology and home broadband adoption and its ongoing Digital Divide research.

Technical compliance can mean a page is readable to a screen reader — it does not guarantee that a person will feel safe, understood, or able to finish what they came to do.

The limits of compliance

Accessibility rules matter. In the United States, the Department of Justice's 2024 rule under Title II of the ADA specifies WCAG 2.1 Level AA as the technical standard for state and local government websites and mobile apps, establishing a clear baseline that agencies must meet (ADA.gov Fact Sheet on the Web Rule). In the federal sphere, the U.S. Access Board's regulatory impact analysis for information and communication technology spells out compliance expectations and their costs.

Yet a "passed audit" is not the same as a completed application, a renewed benefit, or a booked appointment. Compliance checklists are essential baselines, not finish lines, because the lived reality of access also depends on language, trust, digital literacy, privacy, and connectivity.

Standards protect the right to access — they do not ensure the right to complete, to trust, or to benefit.

Why accessibility alone falls short

For public services, the difference between "reachable" and "usable with dignity" hinges on dimensions that standards do not fully address:

- Demographics and socioeconomics shape who has time, devices, language support, and documents available in the moment.

- Devices and connectivity vary widely; a substantial share of people rely on smartphones and face bandwidth constraints that raise abandonment risk on heavy pages (Pew Research Center; Digital Divide research).

- Language and literacy barriers undermine comprehension even when interfaces are technically accessible.

- Digital literacy — knowing how to navigate forms, recover passwords, or upload documents — can be the deciding factor in completion.

- Privacy and trust concerns deter use; research shows that privacy violations measurably erode user trust, which can depress engagement and completion (Martin et al., ScienceDirect).

Municipal practice points to promising countermeasures: New York City embedded a Chief Privacy Officer role with mandates for anonymization guidance and privacy-by-design review to sustain trust in data practices. Those governance moves do not change keyboard focus order; they change whether people feel safe enough to proceed.

Accessibility that ignores language, trust, and real-world constraints can leave people just as excluded as before, despite a "compliant" badge.

Designing for trust, language, and literacy

Inclusive services pair technical accessibility with design patterns that lower cognitive load and build confidence:

- Plain-language content and progressive disclosure cut jargon and present only what's needed, when it's needed — an approach encouraged in federal design guidance (U.S. Web Design System).

- Multilingual content requires more than a toggle. Machine translation can help teams scale, but human review remains essential to avoid errors that mislead or alienate users; W3C's guidance on AI and accessibility highlights quality and presentation risks when relying on automated outputs.

- Low-bandwidth, responsive performance is a matter of equity when smartphone dependence and data caps are common. Prioritizing page weight, caching, and graceful degradation directly serves populations documented by Pew as more likely to be mobile-only or bandwidth-constrained.

- Privacy-by-design — minimizing data collection, clarifying data use, and embedding controls — supports informed consent and has been linked, in both academic framing and municipal practice, to stronger user trust (INRIA/HAL; MDPI).

These choices reduce friction and uncertainty at the exact points where drop-off is most likely — comprehension, connection stability, and consent.
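The low-bandwidth item above can be made concrete with a small server-side check. The following is a minimal sketch, not a recommended implementation: it assumes the service can read request headers and that a lighter page variant exists. `Save-Data` and `ECT` are real request headers, but browser support varies (and `ECT` generally has to be requested by the server), so a missing header says nothing about the connection. The function name, the `user_opted_in` flag, and the choice of which connection types count as slow are all hypothetical.

```python
# Effective connection types treated as "slow" here. This threshold is an
# assumption for illustration; a team would tune it against its own data.
SLOW_EFFECTIVE_TYPES = {"slow-2g", "2g"}


def wants_lite_mode(headers, user_opted_in=False):
    """Return True when a lighter page variant is likely the better default.

    headers: mapping of request header names to values.
    user_opted_in: hypothetical flag for a visible "lite mode" toggle.
    """
    # An explicit choice by the user always wins.
    if user_opted_in:
        return True

    # HTTP header names are case-insensitive, so normalize before lookup.
    normalized = {name.lower(): value.strip().lower() for name, value in headers.items()}

    # "Save-Data: on" signals that the user asked the browser to reduce data use.
    if normalized.get("save-data") == "on":
        return True

    # "ECT" carries the browser's estimate of the effective connection type.
    return normalized.get("ect") in SLOW_EFFECTIVE_TYPES
```

Recording the same signals as analytics dimensions, rather than only using them to pick a page variant, is what later allows drop-off on constrained connections to be compared with everyone else.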

Human-in-the-loop and assisted channels

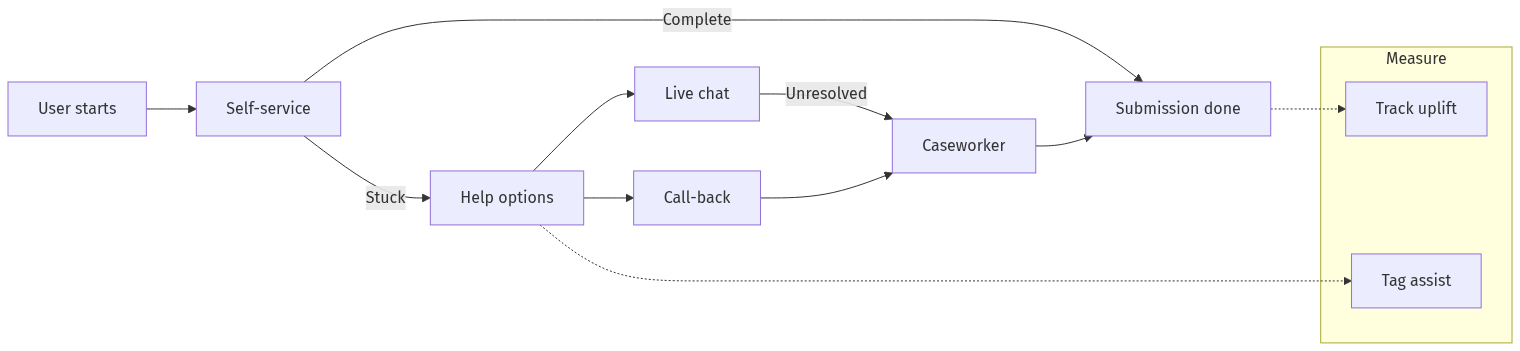

Even the best-designed flows benefit from timely human support. Assisted channels (live chat escalations, call-back options, warm transfers to caseworkers) are designed to catch edge cases and correct misunderstandings. They may reduce abandonment in some contexts, but teams should validate local effects with instrumentation rather than assume impact.

As an example of how human oversight and intervention can improve outcomes at scale, the United States Digital Service reports identifying and resolving a technical error in a way that helped over 400,000 eligible children regain Medicaid coverage. It is a demonstration of active monitoring and intervention changing real-world results. Assisted support should be staffed and managed as a deliberate operational capability; procurement guidance such as the TechFAR Hub Learning Center offers a path to include service-level expectations and resourcing in contracts.

Vendor accounts also describe assisted patterns in high-profile pilots. Coforma, a private design firm that supported the IRS Direct File pilot, reports that its team emphasized inclusive recruitment, bilingual content, and accessible patterns. This is a vendor narrative and should be treated as illustrative rather than as evidence of impact; independent corroboration of effectiveness was not identified in the sources used here.

What gets measured gets improved; what gets published gets prioritized.

Measuring inclusion: uptake, completion, and processing time

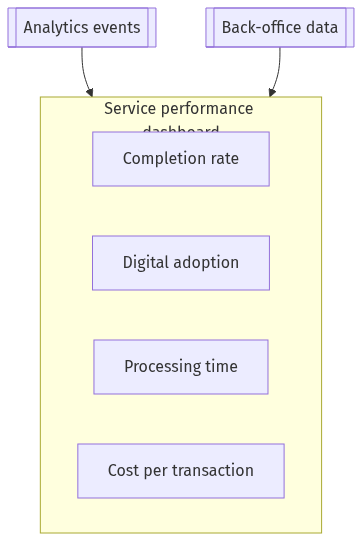

Outcome metrics shift focus from "is the page accessible" to "did people get the outcome they needed." Three core measures anchor this lens:

- Completion rate: the number of digital transactions users complete divided by all started digital transactions for the service.

- Digital adoption (uptake): the share of total service demand conducted digitally rather than through call centers, mail, or in-person channels.

- Processing time: the time from user submission to resolution; track a central value (for example, median) and outliers to spot bottlenecks.
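The three definitions above translate directly into arithmetic. The sketch below is one way to compute them, assuming each request is available as a record with a channel and timestamps; the `Transaction` fields, the function names, and the sample data are all hypothetical placeholders rather than any program's schema or results. It treats every recorded request, on any channel, as service demand, and it uses the 90th percentile as a simple stand-in for "outliers."

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median, quantiles
from typing import Optional


@dataclass
class Transaction:
    """One request for the service. Field names are hypothetical."""
    channel: str                               # "digital", "phone", "mail", "in_person"
    started_at: datetime
    submitted_at: Optional[datetime] = None    # None means abandoned before submit
    resolved_at: Optional[datetime] = None     # None means still pending


def completion_rate(transactions):
    """Completed digital transactions divided by started digital transactions."""
    digital = [t for t in transactions if t.channel == "digital"]
    if not digital:
        return None
    completed = sum(1 for t in digital if t.submitted_at is not None)
    return completed / len(digital)


def digital_adoption(transactions):
    """Share of all service demand that came through the digital channel."""
    if not transactions:
        return None
    digital = sum(1 for t in transactions if t.channel == "digital")
    return digital / len(transactions)


def processing_summary(transactions):
    """Median and 90th-percentile days from submission to resolution."""
    days = sorted(
        (t.resolved_at - t.submitted_at).total_seconds() / 86400
        for t in transactions
        if t.submitted_at is not None and t.resolved_at is not None
    )
    if not days:
        return None
    # quantiles() needs at least two data points.
    p90 = quantiles(days, n=10, method="inclusive")[-1] if len(days) > 1 else days[0]
    return {"median_days": median(days), "p90_days": p90}


# Placeholder data for illustration only; not drawn from any real program.
t0 = datetime(2024, 1, 8, 9, 0)
sample = [
    Transaction("digital", t0, t0 + timedelta(minutes=20), t0 + timedelta(minutes=20, days=2)),
    Transaction("digital", t0),                                  # abandoned
    Transaction("digital", t0, t0 + timedelta(minutes=30), t0 + timedelta(minutes=30, days=4)),
    Transaction("digital", t0, t0 + timedelta(minutes=15)),      # submitted, still pending
    Transaction("phone", t0, t0 + timedelta(hours=1), t0 + timedelta(hours=1, days=10)),
]
```

On the placeholder data, three of the four digital requests were submitted, four of the five requests were digital, and the resolved requests took 2, 4, and 10 days. Whatever definitions a team chooses, the point is to write them down once and keep them fixed so that numbers stay comparable over time.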

Pair quantitative funnels with qualitative signals:

- Trust and perceived safety: brief, opt-in prompts at key steps can detect whether privacy notices are clear enough to sustain confidence; research shows privacy violations reduce trust.

- Assisted-channel insights: tag and track outcomes for users who receive a call-back or warm transfer to learn who benefits most and when to staff support.

Practical instrumentation tips for U.S. teams:

- Define the success event in plain language (for example, "Application submitted and accepted for review") and validate that analytics counts match back-office system counts for the same period.

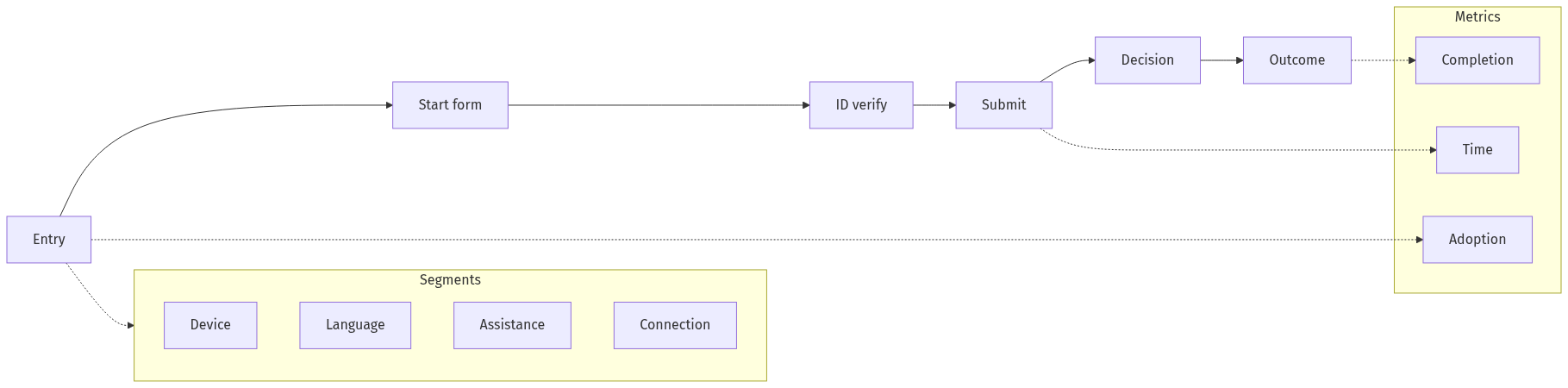

- Map the funnel: entry, start, key steps, submit, decision, outcome. Avoid opaque, vendor-specific event names; use human-readable labels so policy and operations staff can reason about the data.

- Segment by context: device type, connection hints, language, and assistance flags. Pew's data on smartphone dependence and broadband gaps show why these cuts matter.

- Run pre/post evaluations when shipping major changes; freeze metric definitions and compare cohorts.

- Govern the data: institute privacy-by-design reviews, data-minimization policies, and human oversight for automated decisions (INRIA/HAL).
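Several of these tips (mapping the funnel, segmenting by context, and reconciling analytics with back-office counts) can be sketched in a few functions. This is an illustrative outline under stated assumptions, not a reference implementation: it assumes analytics events arrive as dictionaries with a `session_id`, a human-readable `step`, and optional context fields such as `device`. Those field names, the step labels, and the 2% reconciliation tolerance are hypothetical.

```python
from collections import defaultdict

# Human-readable funnel labels following the steps named above.
# "identity_check" stands in for a service-specific key step (hypothetical).
FUNNEL_STEPS = ["entry", "start", "identity_check", "submit", "decision", "outcome"]


def funnel_counts(events, steps=FUNNEL_STEPS):
    """Count the distinct sessions that reached each funnel step, in order."""
    reached = defaultdict(set)
    for event in events:
        reached[event["step"]].add(event["session_id"])
    return [(step, len(reached[step])) for step in steps]


def drop_off(counts):
    """Share of sessions lost between each pair of consecutive steps."""
    losses = []
    for (prev_step, prev_n), (step, n) in zip(counts, counts[1:]):
        lost = 1 - n / prev_n if prev_n else None
        losses.append((f"{prev_step} -> {step}", lost))
    return losses


def funnel_by_segment(events, key, steps=FUNNEL_STEPS):
    """Funnel counts split by a context field such as device or language."""
    groups = defaultdict(list)
    for event in events:
        groups[event.get(key, "unknown")].append(event)
    return {segment: funnel_counts(group, steps) for segment, group in groups.items()}


def counts_reconcile(analytics_count, back_office_count, tolerance=0.02):
    """True if the analytics count is within tolerance of the system of record."""
    if back_office_count == 0:
        return analytics_count == 0
    return abs(analytics_count - back_office_count) / back_office_count <= tolerance
```

Running `counts_reconcile` on the success event for the same period is a cheap guard: if analytics and the back-office system disagree by more than the agreed tolerance, the funnel numbers should not be trusted until the gap is explained.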

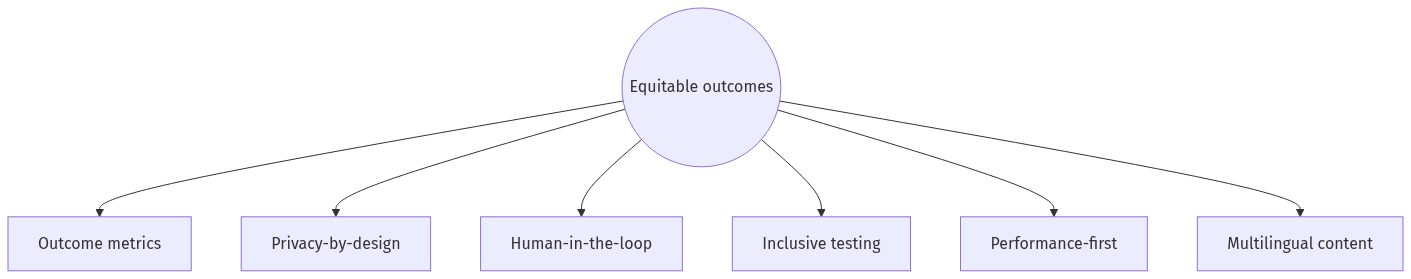

Spruce Technology's approach: designing for equitable outcomes

Spruce Technology's Digital Experience team emphasizes a wider lens in public-sector work: prioritizing measurable improvements in digital adoption, completion rates, and processing time, and embedding privacy-by-design, human-in-the-loop safeguards, inclusive testing, and performance-first engineering. These practices align with widely adopted public guidance, including the U.S. Web Design System on clear, adaptable, plain-language design, academic framing of privacy-by-design, and demographic evidence from the Pew Research Center that supports performance-first approaches for real-world devices and bandwidth.

- Outcome-oriented by default: Treat digital adoption, completion, and processing time as design goals and hold regular operational reviews on these numbers.

- Privacy-by-design and clear data use: Trust modeling, data minimization, and candid privacy notices help reduce abandonment due to uncertainty.

- Human-in-the-loop safeguards: Assisted channels and escalation policies focus on edge cases and low-digital-literacy users; program-level oversight and targeted fixes can unlock outcomes at scale.

- Inclusive testing and content: Recruitment spans languages, devices, ages, and literacy levels; content follows plain-language principles supported by federal design guidance.

- Performance-first engineering: Optimizing for low-bandwidth contexts directly serves smartphone-dependent segments documented by Pew.

Spruce Technology designs not for checkboxes but for measurable equity — the metrics are adoption, completion, and processing time, not merely a compliance report.

Short case study (composite): redesigning a benefits application

The following is a composite example, drawn from patterns and outcomes seen across public-sector programs, presented for illustration rather than as a single program's audited result.

Before: A benefits application shows a low completion rate and long processing times. Analytics indicate high drop-off on identity verification, especially for mobile users. Qualitative feedback suggests confusion about data use and poor translation quality for Spanish speakers.

Interventions: The team rewrites copy in plain language and adds progressive disclosure; replaces machine-only translation with human-reviewed Spanish content; ships a low-bandwidth stylesheet and defers nonessential scripts; adds a conspicuous, plain-language privacy summary with links to details; and introduces a call-back option with a warm transfer to a caseworker for stalled users. The funnel is instrumented, and outcomes are reviewed weekly with operations.

Illustrative outcome: Within one quarter of release, completion rises meaningfully among mobile users; adoption increases in Spanish-language sessions; and median processing time drops as fewer applications require rework due to errors. These are the types of shifts federal programs highlight when teams combine technical and human changes to remove systemic barriers (USDS).

Actionable recommendations for U.S. government teams

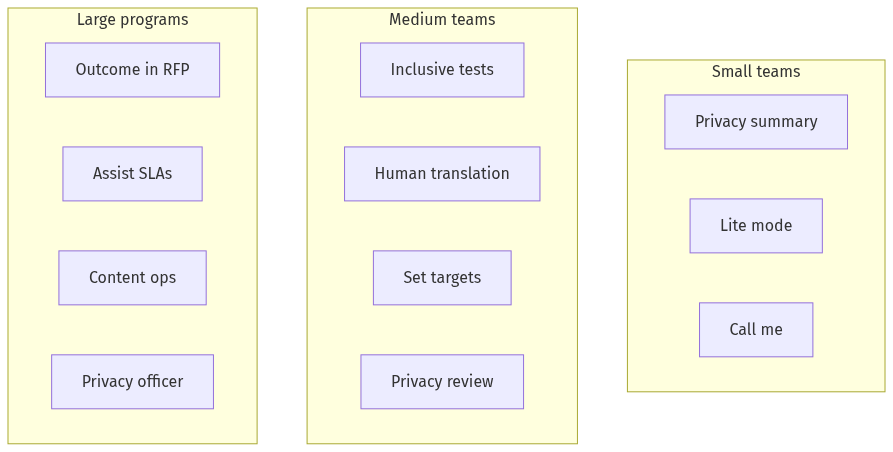

Small teams (weeks, low cost):

- Add a plain-language "privacy at a glance" box at the start of key flows; measure abandonment before/after. Research links privacy clarity to trust.

- Ship a low-bandwidth CSS/JS bundle behind a "lite mode" toggle; compare drop-off on slow networks using connection hints in analytics.

- Turn on a "call me" or live-chat escalation for stalled users during peak hours; tag outcomes to learn whether assisted users complete more often.

- Ensure baseline WCAG 2.1 AA compliance per DOJ's Title II rule as you iterate.
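For the before/after measurements suggested above, a simple two-proportion comparison can indicate whether a change in abandonment is larger than chance variation would explain. The sketch below uses a standard pooled two-proportion z-test; the function name and its inputs are hypothetical, and a pre/post comparison cannot by itself rule out seasonality, outreach campaigns, or other changes that happened in the same window.

```python
from math import sqrt
from statistics import NormalDist


def compare_abandonment(before_started, before_abandoned, after_started, after_abandoned):
    """Compare abandonment rates before and after a change.

    Returns both rates, the change in the rate, the z statistic, and a
    two-sided p-value from a pooled two-proportion z-test.
    """
    rate_before = before_abandoned / before_started
    rate_after = after_abandoned / after_started

    # Pooled abandonment rate under the assumption that nothing changed.
    pooled = (before_abandoned + after_abandoned) / (before_started + after_started)
    std_err = sqrt(pooled * (1 - pooled) * (1 / before_started + 1 / after_started))

    z = (rate_after - rate_before) / std_err if std_err else 0.0
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    return {
        "rate_before": rate_before,
        "rate_after": rate_after,
        "change": rate_after - rate_before,
        "z": z,
        "p_value": p_value,
    }
```

A call such as `compare_abandonment(1000, 400, 1000, 340)` (placeholder counts, not real results) returns a negative `change` when abandonment fell. Freezing the definition of "started" and "abandoned" before the release, as recommended earlier, is what makes the two periods comparable.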

Medium teams (one to two quarters):

- Recruit for inclusive testing across languages, ages, devices, and bandwidth; use plain-language probes to capture trust signals (USWDS).

- Replace machine-only translation with human-reviewed content for your top non-English languages; heed W3C's cautions on automated outputs.

- Set service-level targets for completion and processing time; review monthly in operations.

- Establish a privacy review step with data-minimization checklists (INRIA/HAL).

Large programs (multi-quarter, governance and scale):

- Embed outcome metrics and reporting in procurement using TechFAR-aligned language so vendors must instrument funnels and deliver measurable improvements.

- Fund assisted channels with explicit escalation SLAs and staff training; instrument handoffs to quantify completion uplift.

- Invest in content ops — plain-language rewriting, style guides, and human translation — for your top languages; monitor completion by language and device over time.

- Create a privacy officer or working group to review data flows and publish plain-language privacy commitments.

Risks, trade-offs, and how to avoid common failures

- Over-reliance on AI for accessibility. Auto-generated alt text, captions, or translations can be wrong in subtle, exclusionary ways. W3C urges careful review of AI-generated outputs for accessibility and quality.

- Privacy harms from unnecessary data. Collecting sensitive data "just in case" raises breach risk and depresses trust; privacy-by-design calls for minimization, clear purpose specification, and user comprehension at the point of collection (INRIA/HAL; MDPI).

- Tokenistic testing. Limiting research to tech-savvy, English-speaking users on high-end devices will miss the very groups most at risk of exclusion. Federal design guidance and public research emphasize recruiting across language, device, and bandwidth contexts (USWDS; Pew: Digital Divide).

- Procurement optics over outcomes. Contracts that demand "508 compliance" reports without requiring funnel instrumentation and outcome improvements risk performative progress. TechFAR-aligned procurement can tie payment to measurable gains in completion or processing time.

Conclusion: aim for equitable outcomes, not just accessible interfaces

Compliance is the baseline; outcomes are the goal. Digital government should be judged by whether people complete what they came to do — quickly, safely, and with confidence — not by the number of audit checkmarks.

Public guidance already points the way: measure completion and adoption, reduce end-to-end time, and review results in operations. Real programs demonstrate that human oversight and targeted fixes can reverse harmful exclusions at scale (USDS). And the demographics of device ownership and connectivity make a performance-first, multilingual, privacy-conscious posture an equity imperative (Pew: Digital Divide; Pew 2024 report).

The future of digital government isn't compliant pages — it's equitable outcomes that prove everyone can complete what they need, with dignity and trust.

Sources

- ADA.gov. Fact Sheet: New Rule on the Accessibility of Web Content and Mobile Apps Provided by State and Local Governments. U.S. Department of Justice, 2024.

- GOV.UK Service Manual. Measuring completion rate: how to define and track service completion.

- Office for National Statistics (UK). Introducing our new performance dashboard. ONS Digital Blog, 2017.

- data.gov.uk. Performance Dashboard: Higher Level Stewardship — amendments (dataset overview).

- United States Digital Service. USDS by the numbers: 2024 Impact Report.

- U.S. Web Design System (USWDS). Transforming the American Digital Experience (PDF).

- Pew Research Center. Americans' use of mobile technology and home broadband, 2024.

- Pew Research Center. Digital Divide: Research and data resource hub.

- INRIA/HAL. Trust-Driven Strategies for Privacy by Design (report).

- Martin, K., et al. The penalty for privacy violations: How privacy violations impact trust. ScienceDirect.

- MDPI. The Effects of Applying Privacy by Design to Preserve Privacy and Personal Data Protection.

- W3C. Accessibility of Machine Learning and Generative AI (community note).

- TechFAR Hub / USDS. Learning Center: resources for digital service procurement.

- Coforma. Pioneering IRS Direct File — a Free E-Filing System for American Taxpayers (vendor case study; illustrative).

- tEQuitable. Work culture that works for everyone (company site; descriptive reference).

- U.S. Access Board. Final Regulatory Impact Analysis (ICT).

- NCBI/PMC. Accessibility analysis using WCAG 2.1: evidence from Indian e-government websites (peer-reviewed).